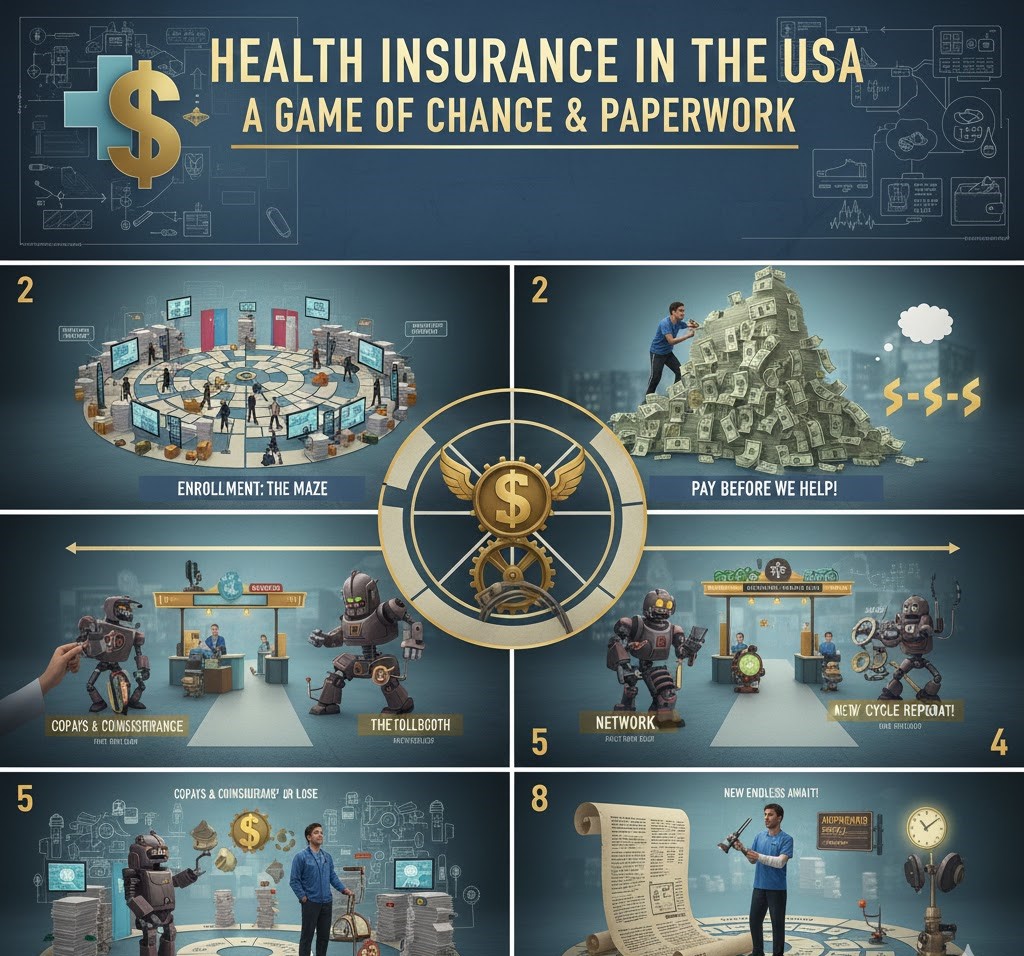

Introduction to Health Insurance in the USA

Health insurance plays a crucial role in healthcare in the United States. It is also a source of trouble for millions of Americans, because many people do not understand how coverage, costs, and benefits work. In …

How Health Insurance Really Works in the USA 2026